Provisioning an IBM Storwize V7000 using OpenStack Cinder and IBM Cloud Manager

The IBM Storwize V7000 is an incredibly versatile storage system that has been highly popular with our clients over the years. IBM was one of the founding members of the OpenStack Foundation in 2012 and remains one of the leading contributors to the OpenStack project year after year, and the Storwize line has offered OpenStack capabilities for many years.

In step with this, Mark III and our team of engineers have helped support clients who have chosen to deploy OpenStack for at least part of their environment, mostly to operate developer-centric private clouds. The following is a very short tutorial on provisioning a volume on a Storwize V7000 in our lab through OpenStack Cinder and IBM Cloud Manager, and mapping it to a host that our digital development team will use for Docker. The next entry in our blog series will show how we use some of Docker's tools to manage containers in this very environment, so stay tuned!

V7000 and OpenStack Cinder Tutorial:

First, we logged in to the IBM Cloud Manager dashboard and used OpenStack Cinder to create a volume for an instance in our Docker environment.
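We did this step in the dashboard, but for readers who prefer scripting, a roughly equivalent request can be made with the unified `openstack` command-line client. The minimal sketch below only builds the argument list; it assumes the client is installed and OpenStack credentials are already sourced, and the helper name `volume_create_cmd` and the volume name `docker-vol01` are hypothetical.

```python
from typing import List


def volume_create_cmd(name: str, size_gb: int) -> List[str]:
    """Build an `openstack volume create` command; Cinder sizes are whole GB."""
    if size_gb <= 0:
        raise ValueError("size_gb must be a positive integer")
    return ["openstack", "volume", "create", "--size", str(size_gb), name]


if __name__ == "__main__":
    # Print instead of executing so the sketch is safe to try anywhere.
    print(" ".join(volume_create_cmd("docker-vol01", 10)))
```

To actually run it, pass the list to `subprocess.run(cmd, check=True)` on a machine with the client configured.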

Second, to verify that everything looked good, we logged in to the Storwize V7000 GUI and viewed the audit log. As expected, the V7000 Cinder driver had automatically connected to the V7000 and created the volume.
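The same check can be scripted against the Storwize CLI. Cinder names volumes `volume-<id>` by default, and the Storwize driver uses that name for the vdisk it creates. This sketch assumes you have captured the colon-delimited output of `lsvdisk -delim :` (for example over SSH); the helper names are hypothetical.

```python
import csv
import io
from typing import Dict, List, Optional


def parse_delimited(output: str, delim: str = ":") -> List[Dict[str, str]]:
    """Parse Storwize CLI output produced with -delim (first line is the header)."""
    return list(csv.DictReader(io.StringIO(output.strip()), delimiter=delim))


def find_cinder_vdisk(lsvdisk_output: str, volume_id: str) -> Optional[Dict[str, str]]:
    """Return the lsvdisk row for a Cinder volume, assuming default 'volume-<id>' naming."""
    expected = f"volume-{volume_id}"
    for row in parse_delimited(lsvdisk_output):
        if row.get("name") == expected:
            return row
    return None
```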

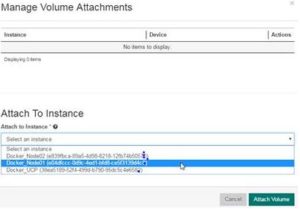

Third, we selected the new volume in the OpenStack dashboard on IBM Cloud Manager and attached it to one of our Docker tenant instances.
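A command-line equivalent of the attach step is `openstack server add volume`. The sketch below (a hypothetical helper, assuming the unified client and sourced credentials) builds that command. The optional `--device` hint may not be honored by every hypervisor driver, which is one reason to check inside the guest afterward.

```python
from typing import List, Optional


def server_add_volume_cmd(server: str, volume: str, device: Optional[str] = None) -> List[str]:
    """Build an `openstack server add volume` command for the given instance and volume."""
    cmd = ["openstack", "server", "add", "volume"]
    if device:
        # A requested device name is only a hint; the hypervisor may choose another.
        cmd += ["--device", device]
    return cmd + [server, volume]
```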

Fourth, since we had attached the volume to the instance named "Docker_Node01," we wanted to confirm, for testing purposes, which compute node Docker_Node01 is running on. We queried the Nova services from the CLI, and the output shows which compute node hosts the instance.
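We used the Nova service listing; another way to answer the same question is to read the instance's extended host attribute, `OS-EXT-SRV-ATTR:host`, which Nova returns to admin users. This sketch parses JSON from either the raw Nova API (`{"server": {...}}`) or the flat output of `openstack server show Docker_Node01 -f json`; that the flat output uses the same key is an assumption worth checking against your client version, and the helper name is hypothetical.

```python
import json
from typing import Any, Dict

HOST_KEY = "OS-EXT-SRV-ATTR:host"  # admin-only extended server attribute


def compute_host(server_json: str) -> str:
    """Return the compute host recorded for an instance."""
    data: Dict[str, Any] = json.loads(server_json)
    # The raw Nova API wraps details in {"server": {...}}; CLI JSON output is flat.
    server = data.get("server", data)
    host = server.get(HOST_KEY)
    if not host:
        raise KeyError(f"{HOST_KEY} missing; admin credentials are usually required")
    return host
```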

Fifth, back in the dashboard, we can see that OpenStack Cinder attached the volume to the Docker_Node01 Ubuntu instance as /dev/vdb.
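Cinder also records the attachment itself. In the Block Storage API, `GET /v2/{project_id}/volumes/{volume_id}` returns a `volume` object whose `attachments` list holds entries with `server_id` and `device`. A minimal sketch (hypothetical helper name) that pulls the device for a given server out of that response:

```python
import json
from typing import Optional


def attached_device(volume_json: str, server_id: str) -> Optional[str]:
    """Return the device path Cinder recorded for server_id, or None if not attached there."""
    volume = json.loads(volume_json)["volume"]
    for attachment in volume.get("attachments", []):
        if attachment.get("server_id") == server_id:
            return attachment.get("device")
    return None
```

This is the device name Nova and Cinder recorded; the guest can enumerate the disk differently, so checking inside the instance remains the final word.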

Sixth, the V7000 audit log shows that OpenStack Cinder automatically detected which compute node hosts Docker_Node01, created a host definition on the V7000 with that node's WWPNs, and then mapped the volume to the newly created host.
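The mapping can also be confirmed from the Storwize CLI with `lshostvdiskmap -delim :`. This sketch assumes the header row includes `name` (the host) and `vdisk_name` columns, and it does not assume any particular host name, since the driver generates that definition itself; the helper name is hypothetical.

```python
import csv
import io
from typing import List


def hosts_mapped_to(lshostvdiskmap_output: str, vdisk_name: str) -> List[str]:
    """Return the Storwize host names that have vdisk_name mapped to them."""
    rows = csv.DictReader(io.StringIO(lshostvdiskmap_output.strip()), delimiter=":")
    return sorted({row["name"] for row in rows if row.get("vdisk_name") == vdisk_name})
```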

Last, to verify that the volume is now attached to the OpenStack instance, we logged in to Docker_Node01 and checked the output of the lsblk command. Sure enough, there is our 10GB volume, attached as /dev/vdb, as expected. Success!
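The lsblk check can be automated as well. This sketch parses the output of `lsblk -b -d -n -o NAME,SIZE,TYPE` (sizes in bytes, whole disks only, no header line) and assumes that a 10GB Cinder volume appears as exactly 10 GiB, since Cinder sizes volumes in GiB; the helper names are hypothetical.

```python
from typing import Dict

GIB = 1024 ** 3  # Cinder's "GB" sizes are allocated as GiB


def parse_lsblk(output: str) -> Dict[str, int]:
    """Map disk name -> size in bytes from `lsblk -b -d -n -o NAME,SIZE,TYPE` output."""
    disks: Dict[str, int] = {}
    for line in output.splitlines():
        fields = line.split()
        if len(fields) == 3 and fields[2] == "disk":
            disks[fields[0]] = int(fields[1])
    return disks


def has_volume(output: str, name: str, size_gb: int) -> bool:
    """True if a disk with this name exists and is exactly size_gb GiB."""
    return parse_lsblk(output).get(name) == size_gb * GIB
```

On the instance, feed it the stdout of that lsblk command, for example captured with `subprocess.run(..., capture_output=True, text=True)`.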

We have OpenStack Cinder scenarios on various storage platforms, as well as many other OpenStack and private cloud demos running in our demo center/lab, so please feel free to reach out if there is anything you'd like to see!