Infrastructure Insights: Securing VMware starts with the CPU!

CPU Exploits! VMware shows low CPU utilization, but reported performance is bad due to high CPU %RDY

I've seen this in quite a few places, but YMMV. Share your criticism or thoughts with me!

Typically, servers are lifecycled every 3-4 years, but why replace them when your VMware clusters are going strong? Maybe you’re getting great performance and you still have free resources?

If you’re looking to maximize your organization’s security posture (who isn’t these days?), consider this, because closing these CPU vulnerabilities can have pretty big performance implications. Only the latest Cascade Lake Xeon CPUs (Platinum 8200, Gold 6200/5200, Silver 4200) or AMD EPYC seem to be immune. But chances are extremely high that you aren't running those processors unless your clusters are new.

It all seemed to start in January 2018 when the media swirled up security buzz with news of Spectre and Meltdown CPU exploits. Soon more CPU vulnerabilities followed.

CVE Details (www.cvedetails.com) is a great site: "The Ultimate Security Vulnerability Datasource"

CVE Details Intel Xeon Vulnerability list:

https://www.cvedetails.com/vulnerability-list/vendor_id-238/product_id-42312/Intel-Xeon.html

VMware response to ‘L1 Terminal Fault - VMM’ (L1TF - VMM) Speculative-Execution vulnerability in Intel processors for vSphere: CVE-2018-3646 (55806)

https://kb.vmware.com/s/article/55806

Implementing Hypervisor-Specific Mitigations for Microarchitectural Data Sampling (MDS) Vulnerabilities (CVE-2018-12126, CVE-2018-12127, CVE-2018-12130, and CVE-2019-11091) in vSphere (67577)

https://kb.vmware.com/s/article/67577

Many have implemented the CPU vulnerability mitigations in VMware vSphere/ESXi. VMware Health and Skyline will even alert you if you have not. But many places are not using Skyline, or are not even on recent versions of vSphere. If you are not running 6.5U3, 6.7U3, or 7.0U1, then you need to update for many reasons beyond just security. Stop reading this and focus on your version first.

Now, if you’re on one of the latest versions (6.5U3, 6.7U3, or 7.0U1):

You're going to need to move off the default (highest performance) scheduler and implement one of the ESXi Side-Channel-Aware Schedulers, either SCAv1 or SCAv2. Performance implications can be huge. SCAv2 seems to be VMware's recommendation, as its performance impact is much less severe than SCAv1's, but organizations should understand the security implications of SCAv2 versus SCAv1 and make the decision that fits best. Note that you will need to be on at least 6.7 (SCAv2 arrived in 6.7 U2) to use SCAv2.
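To make the three scheduler choices concrete, here is a minimal Python sketch of how the two ESXi boot options map to a scheduler. The option names (`VMkernel.Boot.hyperthreadingMitigation` and `VMkernel.Boot.hyperthreadingMitigationIntraVM`) are how I read KB 55806, so verify them against the KB for your build. The host names and values are made up for illustration.

```python
def scheduler_mode(ht_mitigation: bool, ht_mitigation_intra_vm: bool) -> str:
    """Map the two boot options to the scheduler they select.

    ht_mitigation          ~ VMkernel.Boot.hyperthreadingMitigation
    ht_mitigation_intra_vm ~ VMkernel.Boot.hyperthreadingMitigationIntraVM
    (names per my reading of KB 55806; treat as an assumption)
    """
    if not ht_mitigation:
        # Mitigation off: the default, highest performance scheduler.
        return "default"
    # Mitigation on: the intra-VM flag picks SCAv1 (True) or SCAv2 (False).
    return "SCAv1" if ht_mitigation_intra_vm else "SCAv2"


# Hypothetical inventory: host name -> (mitigation, intra-VM mitigation)
hosts = {
    "esx01": (False, True),
    "esx02": (True, True),
    "esx03": (True, False),
}

for name, (mitigation, intra_vm) in hosts.items():
    print(f"{name}: {scheduler_mode(mitigation, intra_vm)}")
```

A report like this makes it easy to spot hosts in the same cluster that ended up on different schedulers.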

In the real world I've seen a huge vCPU scheduling impact with both SCAv1 and SCAv2 when customers create even a few VMs with 8 or more vCPUs. Spreading these VMs across hosts and limiting how many you have can help.

If you don’t mind getting your hands dirty with PowerShell and VMware's PowerCLI, check out the HTAware Mitigation Tool.

https://kb.vmware.com/s/article/56931

It will examine your environment and advise you of the potential impact of closing your vulnerabilities.

So, what have I been seeing after implementation of SCAv1 or SCAv2?

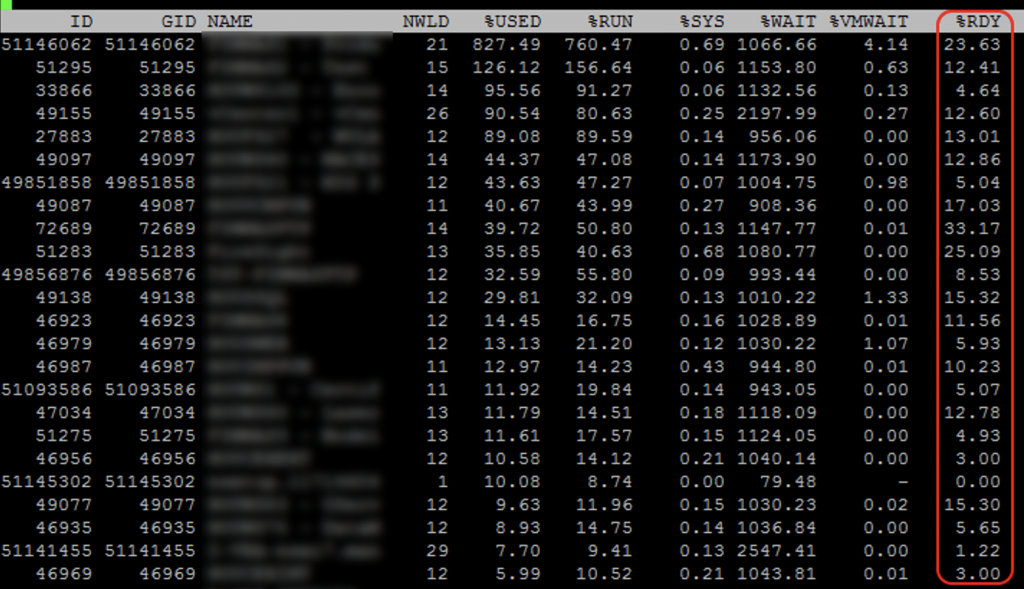

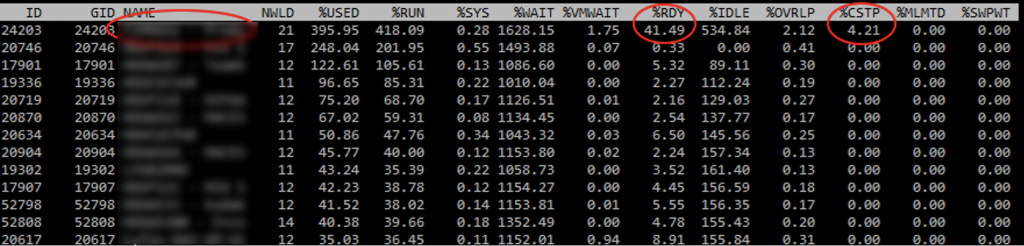

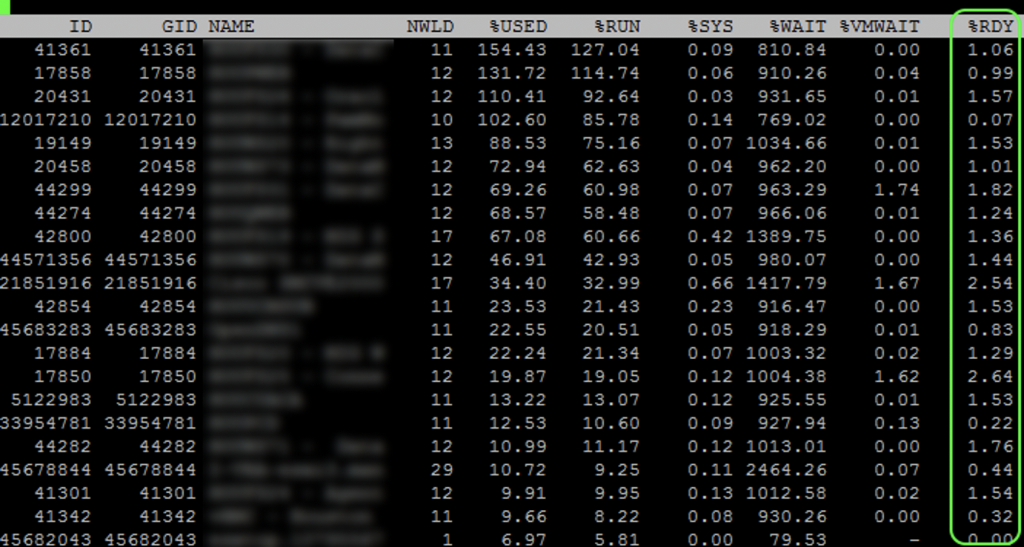

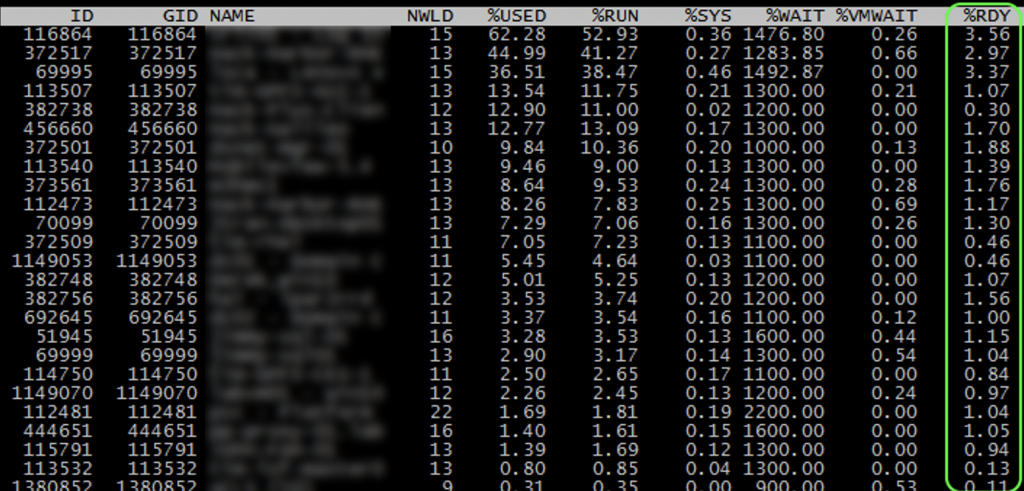

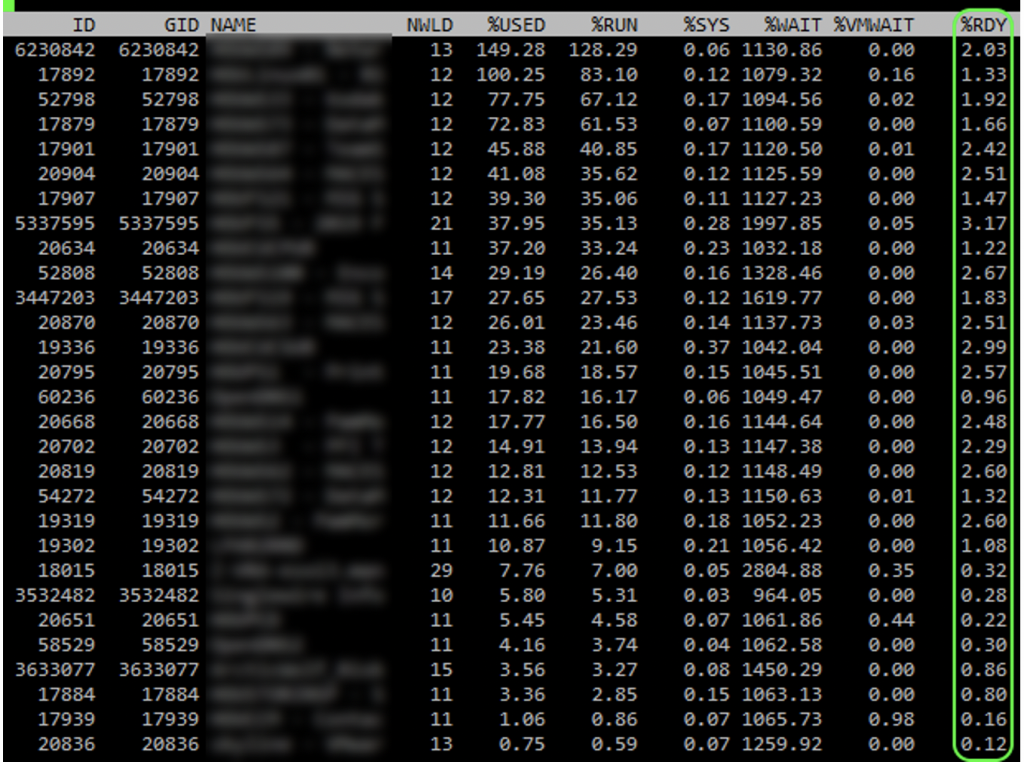

In vCenter and ESXi you see low CPU utilization and everything looks great, but performance is sluggish, even for 2 and 4 vCPU VMs! People complain and ask for more cores, and things either don’t really change or get worse. How is this possible when CPU utilization on your physical host is low?

SSH into quite a few of your ESXi servers and run esxtop. Type a capital V to show only VMs and look at your CPU %RDY. Is %RDY for every VM around or under 1%? If so, awesome! Is it over 2% but under 5%? Not great. Are you seeing over 5%, or even an insanely high 10-40%?
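The %RDY bands above can be written down as a small Python sketch. The label names, the "borderline" band for the 1-2% gap the text leaves open, and the sample VMs are my own assumptions, not anything esxtop reports.

```python
def classify_rdy(rdy_percent: float) -> str:
    """Bucket a CPU %RDY reading using the rough bands from this post."""
    if rdy_percent < 0:
        raise ValueError("%RDY cannot be negative")
    if rdy_percent <= 1.0:
        return "good"        # around or under 1%
    if rdy_percent <= 2.0:
        return "borderline"  # assumption: the post leaves 1-2% unspecified
    if rdy_percent < 5.0:
        return "not great"   # over 2%, under 5%
    if rdy_percent < 10.0:
        return "bad"         # over 5%
    return "severe"          # the 10-40% range


# Hypothetical readings copied by hand from esxtop
samples = {"web01": 0.6, "sql01": 3.4, "app02": 12.8}

for vm, rdy in samples.items():
    print(f"{vm}: {rdy:.1f}% RDY -> {classify_rdy(rdy)}")
```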

If you vMotion just 1 or 2 of the VMs with 6, 8, or more vCPUs to other hosts, does the %RDY immediately drop considerably?

It's beyond the scope here, but understand the principle that the performance of a VM guest gets worse when it has an excess of unused vCPUs. This is counterintuitive for most.

Identify any VMs with 8 or more vCPUs. Evaluate whether they really need that many based on historical usage, and reduce the count if possible. Do this even if you have a large quantity of VMs.
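As a rough illustration of that right-sizing pass, here is a Python sketch that flags VMs with 8 or more vCPUs whose historical peak suggests they could run with fewer. The 70% target peak, the 2 vCPU floor, the record layout, and the sample data are all assumptions for the example, not VMware guidance.

```python
import math
from dataclasses import dataclass


@dataclass
class VmUsage:
    name: str
    vcpus: int
    peak_cpu_pct: float  # historical peak, as % of the VM's configured vCPUs


def suggest_vcpus(vm: VmUsage, target_peak_pct: float = 70.0, min_vcpus: int = 2) -> int:
    """Smallest vCPU count that keeps the historical peak at or under the target."""
    busy_vcpus = vm.vcpus * vm.peak_cpu_pct / 100.0
    needed = math.ceil(busy_vcpus / (target_peak_pct / 100.0))
    # Never suggest growing the VM, and never go below the floor.
    return max(min_vcpus, min(vm.vcpus, needed))


def oversized(vms, threshold: int = 8):
    """Return (name, current, suggested) for large VMs that could shrink."""
    return [
        (vm.name, vm.vcpus, suggest_vcpus(vm))
        for vm in vms
        if vm.vcpus >= threshold and suggest_vcpus(vm) < vm.vcpus
    ]


# Hypothetical inventory
inventory = [
    VmUsage("report01", 8, 20.0),
    VmUsage("sql01", 16, 90.0),
    VmUsage("web01", 4, 10.0),
]

for name, current, suggested in oversized(inventory):
    print(f"{name}: {current} vCPUs -> consider {suggested}")
```

Treat the output as a list of candidates to review with the application owner, not an automatic change.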

I also highly recommend keeping the vCPU count of each VM within the NUMA node size of your host, which is typically, but not always, the physical core count of a single socket.
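A quick Python sketch of that NUMA check, assuming the simple case where one NUMA node equals one socket's physical cores. The 12-core node size and the VM list are hypothetical.

```python
import math


def numa_nodes_spanned(vcpus: int, cores_per_node: int) -> int:
    """How many NUMA nodes a VM needs if each vCPU gets its own physical core."""
    if vcpus < 1 or cores_per_node < 1:
        raise ValueError("vcpus and cores_per_node must be positive")
    return math.ceil(vcpus / cores_per_node)


def fits_numa_node(vcpus: int, cores_per_node: int) -> bool:
    """True when the VM fits inside a single NUMA node."""
    return numa_nodes_spanned(vcpus, cores_per_node) == 1


# Hypothetical host: 2 sockets x 12 physical cores, one NUMA node per socket
CORES_PER_NODE = 12
vms = {"web01": 4, "app02": 12, "sql01": 16}

for name, vcpus in vms.items():
    status = "fits" if fits_numa_node(vcpus, CORES_PER_NODE) else "spans nodes"
    print(f"{name}: {vcpus} vCPUs -> {status}")
```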

People seem to hate on 4-socket boxes in favor of 2-socket, but because of how vCPU scheduling works, a 4-socket host can often run many more VMs with higher vCPU counts with much less of an impact on scheduling.

While this article focuses on Intel, I'm still a huge fan of both Intel and AMD. AMD has the advantage of 64-core CPUs, which means 128 cores in a 2-socket server. That's 256 threads with SMT in a 2-socket box!

WHAT ABOUT YOUR FIRMWARE?

Often overlooked! Many CPU fixes, for both AMD and Intel, come in firmware/microcode updates. Lights-out management controllers and UEFI also get security updates through firmware. Are you monitoring your servers and storage continuously over the months for available updates? You should be. Many products exist to help with and automate this.

There are dozens of other factors and optimizations that can hurt or help the CPU performance of your VM guests and physical hosts. I’ll cover some in a future post.